Vision-guided Robotics

Smart robots with the power of sight: More capabilities and flexibility for a wide range of applications

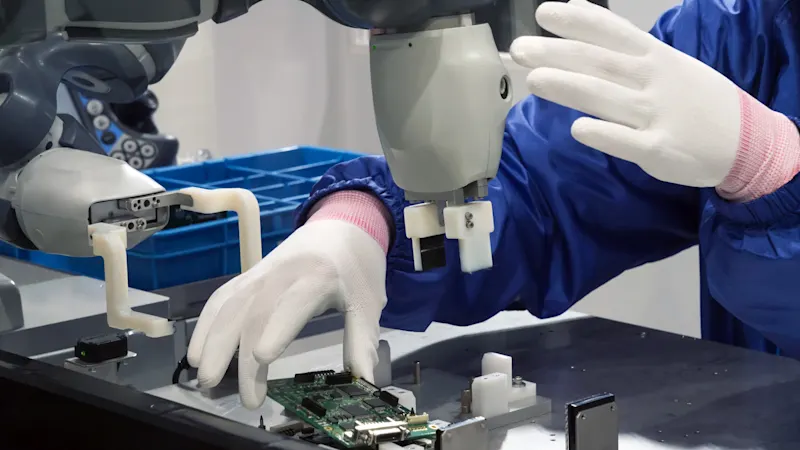

Robots perform tasks that are impossible or difficult for humans to accomplish. Computer vision gives robots "eyesight", making them more flexible and opening up a wide range of new applications. We offer you a customized vision solution at the best price/performance ratio.

Fast integration through compatibility

Compatible with KUKA, FANUC, Universal Robots, Schmalz, and many well-known brands in robotics

Conformity with ROS and GenICam

Basler 2D and 3D cameras are ROS 1, ROS 2, and GenICam compliant for standardized, easy integration

Plug & play: USB 3.0 & GigE/5GigE

Standard interfaces with high data throughput, supported by industrial PCs and embedded systems

Broad portfolio

In addition to compatible hardware, we offer the appropriate software portfolio for image acquisition and processing
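Whether an interface keeps up with a camera can be checked with simple arithmetic: the raw data rate is width × height × bytes per pixel × frame rate. The sketch below compares that rate with nominal link rates. The 80 % usable fraction, the function names, and the example camera are assumptions for illustration, not Basler specifications; real throughput also depends on the host, driver, and protocol overhead.

```python
# Nominal payload rates in MB/s (1 MB = 10**6 bytes). These are textbook
# link rates, not measured or vendor-specified camera throughput.
NOMINAL_LINK_MB_S = {
    "GigE": 1_000 / 8,      # 1 Gbit/s
    "5GigE": 5_000 / 8,     # 5 Gbit/s
    "USB 3.0": 4_000 / 8,   # 5 Gbit/s line rate minus 8b/10b encoding overhead
}


def required_mb_per_s(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Raw, uncompressed image data rate in MB/s."""
    return width * height * bits_per_pixel / 8 * fps / 1e6


def fits(interface: str, width: int, height: int, bits_per_pixel: int,
         fps: float, usable_fraction: float = 0.8) -> bool:
    """True if the stream fits within an assumed usable share of the link."""
    budget = NOMINAL_LINK_MB_S[interface] * usable_fraction
    return required_mb_per_s(width, height, bits_per_pixel, fps) <= budget


if __name__ == "__main__":
    # Hypothetical 5 MP mono camera (2448 x 2048 pixels, 8 bit) at 30 fps
    for name in NOMINAL_LINK_MB_S:
        print(name, fits(name, 2448, 2048, 8, 30))
```

Under these assumptions the 5 MP example needs about 150 MB/s: more than the GigE budget, but within the budgets for 5GigE and USB 3.0. Lowering the frame rate or reading out a smaller region of interest reduces the data rate proportionally.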

The power of sight: Smart robots through machine vision

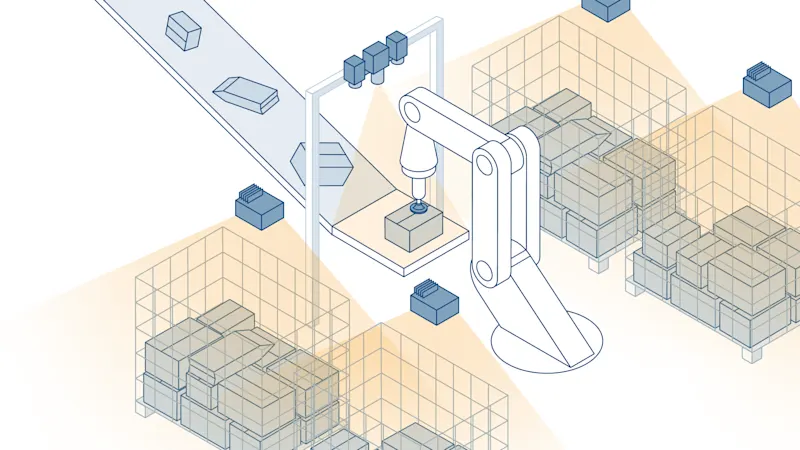

Image processing lets modern industrial robots do much more: the vision system captures precise details that the robot uses to control its movements, and by evaluating the image data in real time, the robot can correct those movements as it works. This expands the possible uses of robots in production and logistics.

More flexible processes thanks to machine vision

Even simple tasks, such as gripping components from a defined position, often fail without image processing if the components are not precisely positioned. Vision systems solve such problems: cameras capture the actual position of the components, the system calculates the deviation from the expected position, and it passes corrected 2D or 3D coordinates to the robot controller.
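For parts lying flat on a surface, this correction step can be sketched as a planar rigid transform: the grip pose taught relative to a reference part position moves along with the part's measured offset and rotation. This is a minimal sketch assuming the camera is already calibrated to the robot base frame; the class and function names are hypothetical, and real robot controllers expect their own pose formats.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose in the robot base frame (camera calibration assumed)."""
    x: float      # mm
    y: float      # mm
    theta: float  # rad, not wrapped to (-pi, pi] in this sketch

    def compose(self, other: "Pose2D") -> "Pose2D":
        """Express `other` (given relative to self) in the parent frame."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        return Pose2D(self.x + c * other.x - s * other.y,
                      self.y + s * other.x + c * other.y,
                      self.theta + other.theta)

    def inverse(self) -> "Pose2D":
        """Inverse rigid transform: rotate back, then undo the translation."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        return Pose2D(-(c * self.x + s * self.y),
                      s * self.x - c * self.y,
                      -self.theta)


def corrected_grip(reference: Pose2D, measured: Pose2D, taught_grip: Pose2D) -> Pose2D:
    """Move the taught grip pose by the deviation between reference and measured part pose."""
    grip_in_part = reference.inverse().compose(taught_grip)  # grip relative to the part
    return measured.compose(grip_in_part)                    # same relation, new part pose
```

Because the grip is stored relative to the part, both a shifted and a rotated part are handled by the same two lines in `corrected_grip`.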

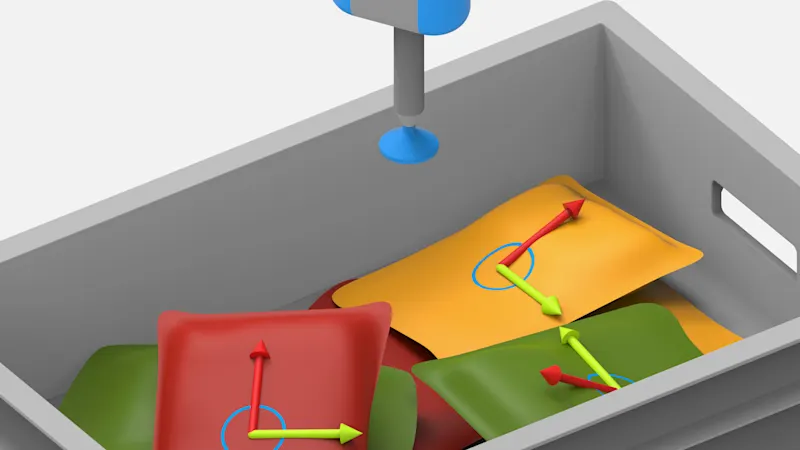

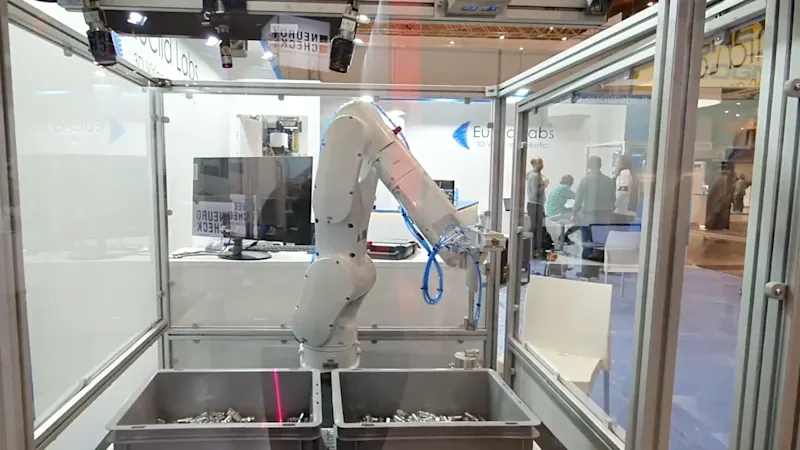

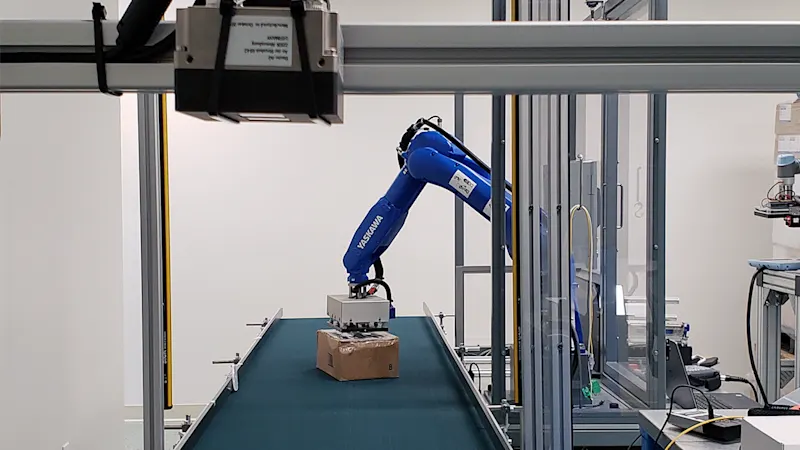

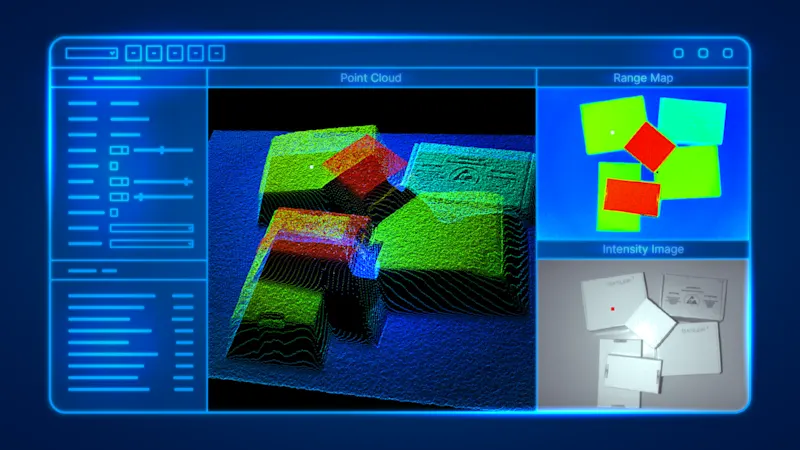

The most demanding discipline is bin picking, in which randomly arranged parts must be gripped precisely. To do this, the vision system identifies the next part that can be gripped, determines its exact 3D position, and transmits the data to the robot. Without image processing, this task could not be solved.
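How the vision system chooses "the next" part is application-specific. One simple heuristic, sketched below, discards detections with low confidence or with a grip axis tilted beyond what the gripper can reach, then takes the highest remaining part, since parts on top of the pile are least likely to be blocked by others. The `Detection` fields, thresholds, and function name are hypothetical and not taken from any Basler software.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Detection:
    """One part found by a hypothetical 3D detector, in robot base coordinates (z up assumed)."""
    part_id: int
    x_mm: float
    y_mm: float
    z_mm: float        # larger means higher in the pile
    tilt_deg: float    # angle between the part's grip axis and vertical
    score: float       # detector confidence in [0, 1]


def next_pick(detections: Sequence[Detection],
              min_score: float = 0.7,
              max_tilt_deg: float = 30.0) -> Optional[Detection]:
    """Return the part to grip next, or None if no detection qualifies."""
    candidates = [d for d in detections
                  if d.score >= min_score and d.tilt_deg <= max_tilt_deg]
    if not candidates:
        # Nothing safely grippable: the cell might rescan or rearrange the bin.
        return None
    # Prefer the topmost part; break ties by confidence.
    return max(candidates, key=lambda d: (d.z_mm, d.score))
```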

Vision systems also play a key role when robots work alongside people, especially cobots. They increase safety by helping to avoid collisions, thus protecting the health of human colleagues. At the same time, more precise movements prevent damage to workpieces and equipment, which reduces costs and downtime.

The right vision system for your robotics application

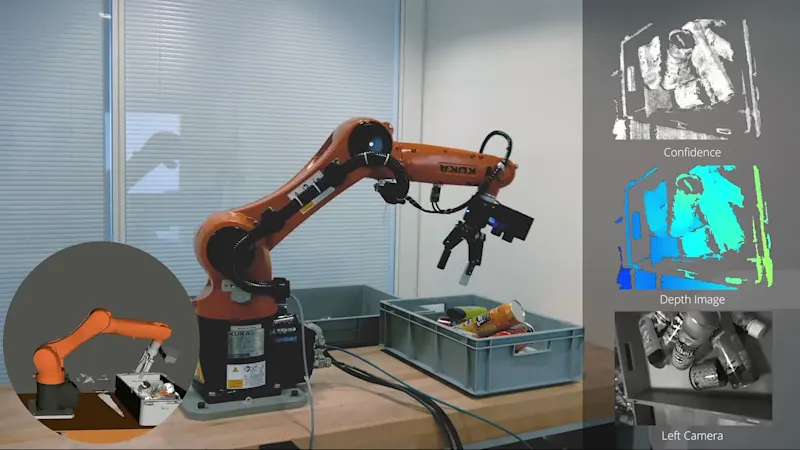

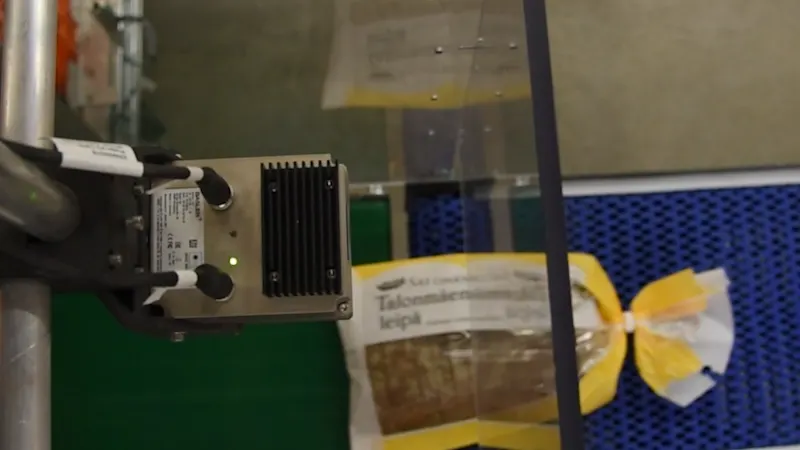

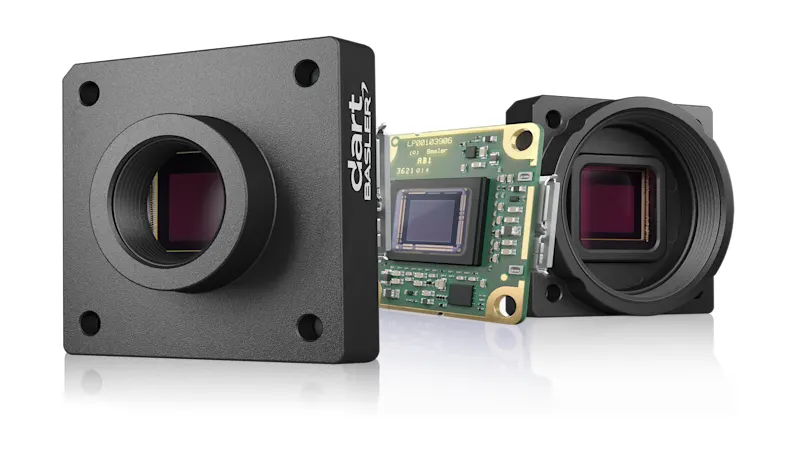

The selection and setup of a vision system are crucial to the success of your robotics application. A key factor is the positioning of the camera: it can either be permanently installed ("off-arm") or attached directly to the robot arm ("on-arm"). The latter requires lightweight, robust cameras with vibration-resistant cabling.
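The mounting position also changes the coordinate math. With an off-arm camera, one fixed calibration transform maps camera coordinates into the robot base frame; with an on-arm camera, the flange pose reported by the robot at the moment of capture must be chained with the camera's fixed mounting offset. The sketch below uses 4 × 4 homogeneous matrices in plain Python. It restricts rotations to the z axis to stay short, and all function names and numbers are illustrative assumptions, not calibration results.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]
Point = Tuple[float, float, float]


def transform(rz_deg: float, tx: float, ty: float, tz: float) -> Matrix:
    """4x4 homogeneous matrix: rotation about z followed by a translation."""
    c, s = math.cos(math.radians(rz_deg)), math.sin(math.radians(rz_deg))
    return [[c, -s, 0.0, tx],
            [s, c, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def apply(t: Matrix, p: Point) -> Point:
    v = (p[0], p[1], p[2], 1.0)
    x, y, z = (sum(t[i][k] * v[k] for k in range(4)) for i in range(3))
    return (x, y, z)


def off_arm_to_base(T_base_cam: Matrix, p_cam: Point) -> Point:
    # Off-arm (eye-to-hand): one fixed transform from camera to robot base.
    return apply(T_base_cam, p_cam)


def on_arm_to_base(T_base_flange: Matrix, T_flange_cam: Matrix, p_cam: Point) -> Point:
    # On-arm (eye-in-hand): flange pose at capture time, chained with the fixed mounting offset.
    return apply(matmul(T_base_flange, T_flange_cam), p_cam)
```

Because the on-arm chain depends on the flange pose at the moment of exposure, that pose has to be recorded together with each image.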

Another criterion is the choice between a conventional industrial camera and a smart camera. While industrial cameras offer the highest precision and speed thanks to external processing, smart cameras score points with integrated image evaluation. The final choice depends on the requirements of your application, such as precision, speed, and environmental conditions.

In addition to the camera, lighting, optics, and cabling play a central role. Customized lighting and torsion-resistant cables ensure that your system works reliably, even under demanding conditions.
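Precision, one of the requirements named above, can be translated into a first estimate of sensor resolution: divide the field of view by the smallest feature to be resolved and multiply by the number of pixels that feature should cover. The default of 3 pixels per feature is a common rule of thumb rather than a fixed rule, and the function name is hypothetical; lens quality, lighting, and subpixel algorithms shift the real requirement.

```python
import math
from typing import Tuple


def min_sensor_resolution(fov_w_mm: float, fov_h_mm: float, feature_mm: float,
                          px_per_feature: float = 3.0) -> Tuple[int, int, float]:
    """Minimum (width_px, height_px, megapixels) so that the smallest
    feature spans px_per_feature pixels across the whole field of view."""
    if feature_mm <= 0:
        raise ValueError("feature_mm must be positive")
    width = math.ceil(fov_w_mm / feature_mm * px_per_feature)
    height = math.ceil(fov_h_mm / feature_mm * px_per_feature)
    return width, height, width * height / 1e6


if __name__ == "__main__":
    # Hypothetical 400 x 300 mm field of view, smallest relevant feature 0.5 mm
    print(min_sensor_resolution(400, 300, 0.5))  # (2400, 1800, 4.32)
```

A sensor with at least this many pixels in each direction is then chosen; if its aspect ratio differs from the field of view, the more demanding axis decides.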

Coordinated components from a single source

We offer software and hardware components for your smart computer vision system, all optimally matched to each other:

2D cameras with resolutions from VGA to 127 MP

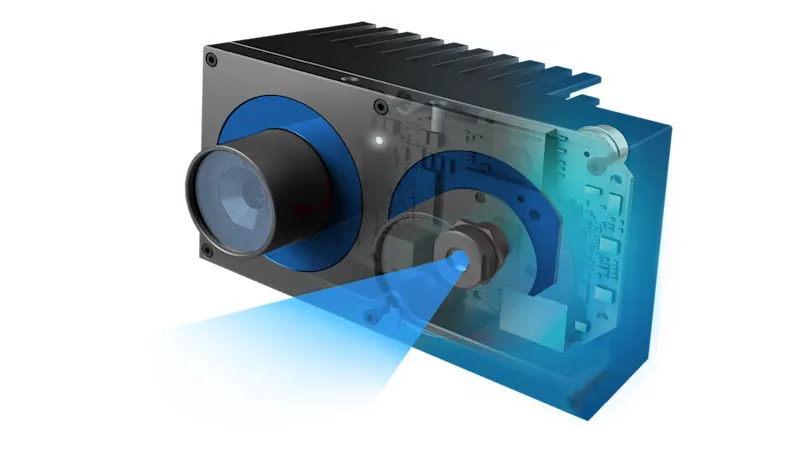

Time-of-Flight and stereo cameras for cost-effective, smart 3D imaging

Wide range of lighting, easily integrated via Basler's pylon SDK

PC cards and frame grabbers matched to your vision technology

Carefully selected accessories such as lenses, drag chain compatible cables, and IP67 protective housing
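The two 3D principles in the list rest on simple geometry. A Time-of-Flight camera derives distance from the round-trip time of light, d = c·t/2 (many ToF sensors measure this indirectly as the phase shift of modulated light). A rectified stereo pair derives depth from disparity, Z = f·B/d, with focal length f in pixels, baseline B, and disparity d in pixels. The sketch below only evaluates these textbook formulas with hypothetical numbers; it is not how any particular Basler camera computes depth internally.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to the target: light travels there and back, hence the /2."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2


def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen in a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (zero means infinitely far)")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    print(tof_distance_m(10e-9))          # 10 ns round trip -> about 1.5 m
    print(stereo_depth_m(1400, 0.1, 70))  # hypothetical camera values -> 2.0 m
```

Because Z is inversely proportional to disparity, a one-pixel disparity error costs more depth accuracy at long range than at short range (the error grows roughly with Z²), which is one reason baseline and focal length are chosen per application.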

Fast integration through system compatibility

Basler products have proven compatible with many robotics solutions: robots from KUKA, FANUC, Universal Robots, Denso, and Techman, as well as gripping systems from Schmalz and many other robotics brands, work smoothly with our vision systems.

ROS 1 and 2 compatibility of Basler 2D and 3D cameras

Basler vision components integrated into the robot operating systems of many manufacturers, e.g. FANUC

Software connectors, e.g. for KUKA, Universal Robots, and Franka Emika

Contact us for more details on compatibility.

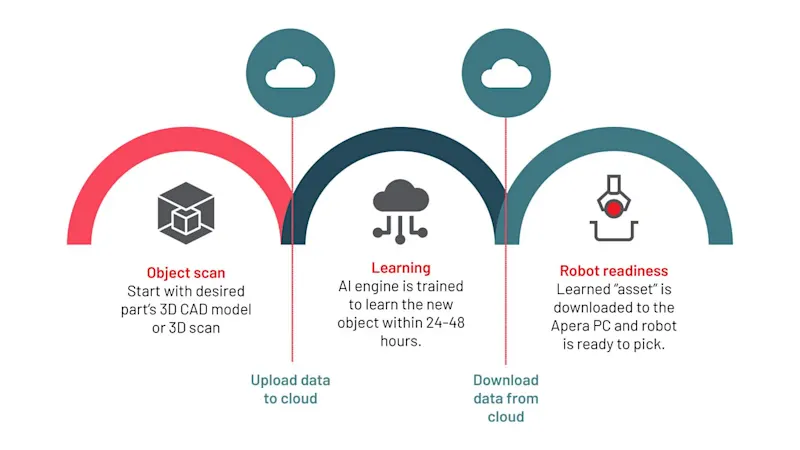

Software as the key to intelligent robotics systems

In addition to the right choice of vision hardware, software is crucial for implementing "sighted" robot cells economically and efficiently. Robots and grippers often work with proprietary controllers, so seamless integration requires well-thought-out concepts. As a result, development time accounts for a significant part of project costs, which makes powerful software solutions and intuitive programming tools essential for mastering complex tasks quickly and efficiently.

3D application software for robotics

The individual software modules are specially developed for typical robotics applications, such as object detection, picking tasks, and navigation. They are intuitive to operate and can be activated for the respective application via plug-and-play. Because the modules can be selected individually, total system costs stay low.


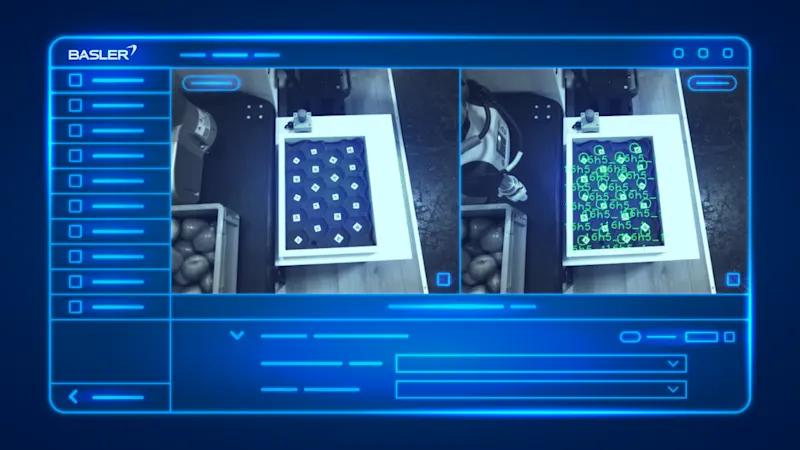

2D image processing as a complement to robot applications

The Basler pylon Software Suite can do much more than just configuration and image acquisition. It enables high-performance image processing functions with the integrated pylon vTools.

pylon SDK software is the programming interface for all camera models, designed to be beginner-friendly for high productivity and stable applications

pylon Viewer offers camera evaluation and powerful tools

pylon drivers & GenTL offer stable and certified drivers for Windows, Linux, macOS, and Android

pylon vTools enable image processing tasks such as object positioning, measurement, or code recognition

Application examples in robotics

These real imaging systems have been successfully implemented to save time and money in a wide variety of applications. See for yourself how our customized solutions improve applications.

Most popular products

For efficient and reliable machine vision applications in this industry, the following Basler products are often the best choice: